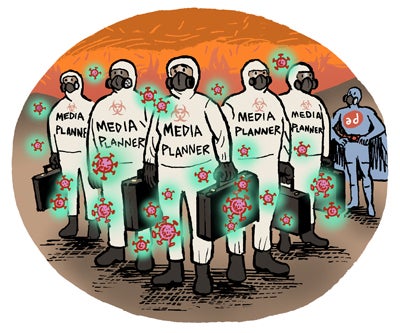

There’s still no vaccine for COVID-19 misinformation.

Ad networks, exchanges and demand-side platforms, including Google and Revcontent, are letting misinformation related to the virus slip through their systems.

In a study examining the monetization of 1,000 questionable websites between January and February, Google was found to be responsible for 69% of ads placed against stories making false claims about COVID-19.

That’s not surprising considering Google’s market share. The other ad platforms uncovered by the study, which was conducted by AdVerif.AI, an Israeli startup working to develop tools to counter hate speech and fake news, include: Revcontent (associated with 7% of ads funding virus misinformation); OpenX, The Trade Desk and Infolinks (4% apiece); Criteo (3%); and PubMatic, Taboola and Xandr (2% each).

AdVerif.AI visited each of the 1,000 iffy sites in its study 10 times a day for two months, generating around 600,000 impressions overall.
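The 600,000 figure follows from the study's crawl schedule. A quick back-of-the-envelope check, assuming "two months" means roughly 60 days and that each visit produced about one recorded impression (both are assumptions, not details from the study):

```python
# Rough check of the study's sample size.
sites = 1_000                  # questionable sites in the study
visits_per_site_per_day = 10   # crawl frequency reported by AdVerif.AI
days = 60                      # assumption: "two months" is about 60 days

total_visits = sites * visits_per_site_per_day * days
print(f"{total_visits:,}")     # 600,000
```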

In other words, this is just a sliver of what’s out there.

Cracks in the system

The underbelly of the internet still attracts dollars from unwitting advertisers. Companies like Integral Ad Science and DoubleVerify can automatically block classic brand safety problems like nudity and violence, but detecting misinformation is trickier, said Or Levi, CEO of AdVerif.AI, which he co-founded in 2017 with Reenah Nahum, the former lead data scientist at mobile ad tech company Bidalgo.

“The technology isn’t yet at the stage where you can automatically deal with misinformation like you’d deal with spam or brand safety using domain block lists and keyword blocking,” said Levi.

It’s not always clear whether content contains misinformation, even to a human reader, so it’s necessary to analyze stories using semantic text models and psycholinguistics – the study of how people process language – in order to identify manipulative speech and false narratives.

AdVerif.AI also uses a machine learning algorithm called FakeRank to uncover websites spreading misinformation by looking at the connections between sites flagged by Poynter and other reputable fact-checking networks.
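AdVerif.AI has not described how FakeRank works internally, so the following is not its method. As a loose illustration of the general idea of scoring sites by their connections to already-flagged sites, here is a minimal sketch of personalized PageRank, a well-known stand-in technique. The link graph, the domain names and the `propagate_scores` function are all hypothetical.

```python
def propagate_scores(links, flagged, damping=0.85, iterations=50):
    """Personalized PageRank seeded on flagged sites (illustrative only).

    links:   dict mapping a site to the list of sites it links to
    flagged: set of sites already flagged by fact-checkers (the seeds)
    """
    sites = set(links) | {t for ts in links.values() for t in ts} | set(flagged)
    seed = {s: (1.0 / len(flagged) if s in flagged else 0.0) for s in sites}
    score = dict(seed)
    for _ in range(iterations):
        # Every round, a fixed share of the score restarts at the seeds.
        nxt = {s: (1 - damping) * seed[s] for s in sites}
        for src in sites:
            targets = links.get(src, [])
            if targets:
                # Split the remaining score evenly across outgoing links.
                share = damping * score[src] / len(targets)
                for t in targets:
                    nxt[t] += share
            else:
                # Sites with no outgoing links hand their score back to the seeds.
                for s in flagged:
                    nxt[s] += damping * score[src] / len(flagged)
        score = nxt
    return score


# Hypothetical link graph: one flagged site, one site tied to it, two unrelated.
links = {
    "flagged-a.example": ["unknown-b.example"],
    "unknown-b.example": ["flagged-a.example"],
    "unrelated-c.example": ["unrelated-d.example"],
    "unrelated-d.example": [],
}
scores = propagate_scores(links, flagged={"flagged-a.example"})
```

In this toy graph, `unknown-b.example` ends up with a positive score because it is linked to the flagged site, while the two unrelated sites stay at zero.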

“A key part of the challenge for ad platforms is that hate speech and disinformation are so nuanced and rapidly changing,” Levi said.

Man and machine

Using AI to detect misinformation is also tricky because models can inherit bias from their designers and their input data, said Marc Goldberg, CRO of web analytics and bot detection company Method Media Intelligence. That means AI models need human input.

“The hate lexicon has evolved beyond a few common words, although it can be easily determined as hate when paired with a human and context,” said Goldberg.

“COVID misinformation is new territory,” he said. “And since we don’t understand the virus to start with, only the most egregious misinformation can be identified by both humans and technology and applied to models.”

In one instance, AdVerif.AI’s algorithm found Bentley ads on far-right financial blog zerohedge.com served by both Google and Revcontent on a story about a laboratory accident being the most likely cause of the COVID-19 pandemic.

In another, ads for Fortinet, a public company that sells cybersecurity solutions, ran adjacent to a post titled “The Covid19 Riots And Race Connection: The Elite Have Pushed The Button” on a site called illuminatipuppet.net. Google and Criteo were the ad platforms in that case.

“We should consider this an adversarial cat-and-mouse game,” Levi said. “There are many ad platforms and these sites are good at finding the loopholes in the vetting process.”

For example, proactive pre-bid filters can help, but they need to be combined with post-bid verification.

“Site inclusion lists should be standard operating procedure for buyers, but it’s important to remember that true enforcement can only be done post-bid,” Goldberg said. “Having a pre-bid filter will not be enough.”
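To make the pre-bid versus post-bid distinction concrete, here is a minimal sketch. It assumes one common reason pre-bid filtering falls short: the URL declared in a bid request may not match where the ad actually renders. The inclusion list, the domain names and both function names are hypothetical and do not reflect any vendor's API.

```python
from urllib.parse import urlparse

# Hypothetical buyer-maintained inclusion list (example domains only).
INCLUSION_LIST = {"example-news.com", "example-sports.com"}


def domain_of(url):
    """Return the hostname of a URL, without a leading 'www.'."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def pre_bid_allows(declared_url):
    """Pre-bid: the buyer only sees the URL declared in the bid request."""
    return domain_of(declared_url) in INCLUSION_LIST


def post_bid_violation(declared_url, observed_url):
    """Post-bid: compare the declared URL with where the ad actually rendered."""
    observed = domain_of(observed_url)
    return observed not in INCLUSION_LIST or observed != domain_of(declared_url)


declared = "https://www.example-news.com/story"
observed = "https://fringe-site.example/post"

print(pre_bid_allows(declared))                # True
print(post_bid_violation(declared, observed))  # True
```

Here the bid passes the pre-bid inclusion check, and only the post-bid comparison reveals that the ad landed on a site outside the list.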

The cat-and-mouse game continues

Levi acknowledged that the numbers in his study are estimates and that it’s difficult for a third party to discern exactly how much misinformation, COVID-19 or otherwise, is being monetized across the web. Site traffic and ad-serving dynamics fluctuate.

But while most brands are aware of the existence of widespread misinformation online, they’re often not aware that it’s their own ad dollars directly funding the problem.

And there’s no easy button to fix it.

Even Facebook, which has billions of dollars at its disposal, hasn’t fully automated the process of misinformation detection, Levi said, pointing to Mark Zuckerberg’s 2018 congressional testimony, in which he said he could envision AI taking a primary role in detecting hate speech on Facebook within five to 10 years.

A Google spokesperson told AdExchanger that the company has “strict publisher policies” in place against promoting content and misinformation about the pandemic and that when Google finds content that violates these policies, “we remove its ability to monetize.” Google also referenced its advertiser controls that give brands the ability to decide where their ads run, including the ability to exclude specific websites or entire topics.

Criteo told AdExchanger that it’s working with ad verification partners and brand safety vendors, including Oracle Data Cloud on the web and Pixalate in apps, to protect its supply quality globally. PubMatic told AdExchanger that it, too, partners with ad fraud and inventory quality verification vendors, and that its approval process combines manual and automated reviews for all of the inventory on its exchange. Revcontent told AdExchanger that it “enforces stringent quality standards” for all of the publishers it accepts into its network and that it denies more than 90% of applications.

Yet, why are shady publishers able to slide past all of these controls?

Some more established websites, such as Gateway Pundit, a conservative site flagged by NewsGuard for distorting information and spreading hoaxes, don’t appear to meet Google’s bar for problematic content. All you have to do is click on an AdChoices icon to see that Gateway Pundit monetizes with Google.

And other more fly-by-night sites are just too slippery.

“Once one of these sites has been discovered, they put up a new site with a new domain and continue on with the same practices,” AdVerif.AI’s Levi said. “It might take a few months for an ad network to identify what’s going on and by then they’ve made a good sum and moved on to the next site.”

Which is part of why this is an ongoing battle, said Bob Regular, CEO of Infolinks.

“Fighting disinformation is a complicated issue that the brightest minds from AI to national security haven’t figured out a way to definitively tackle,” Regular said.

(But hope springs eternal. The CEOs of Facebook, Google and Twitter (Zuckerberg, Pichai & Dorsey, LLC) are scheduled to testify before Congress on Thursday in an effort to get to the bottom of social media’s role in promoting extremism and misinformation.)