Despite the tech company takeover of Cannes, the ad industry’s current infatuation – artificial intelligence – confined its appearances to panels and presentations.

But a few AI aspirations (“deployments” is too strong a word in many cases) are worth calling out.

Tencent

The Chinese maker of the popular WeChat application has a machine learning agenda that rivals those of Google and IBM.

WeChat wants to build expertise in machine learning across a range of functionalities: advanced speech recognition (understanding spoken language); natural language processing (understanding the context behind unstructured text or speech); and computer vision (facial, image and video recognition). All of these capabilities are built on a machine learning foundation, so the systems are designed to improve with use.

Tencent then hopes to apply this tech to an array of applications, including gaming AI, chatbots, smart assistants, social ads and video searches.

The ambition makes sense: Tencent is more than WeChat. Beyond social networking, its tendrils extend into news content, games and entertainment, a mobile browser, financial services and Wi-Fi services. Its social ads business has also risen dramatically in the past year, a spokesperson said.

Because of all of those different use cases, and all of the data Tencent collects, it needs artificial intelligence. That’s why two years ago, the company’s C-levels decided to invest in AI as a core technology.

Last year, Tencent built an AI lab with 50 scientists doing AI research and 100 engineers who could apply it. The spokesperson said that’s actually small compared to similar teams at Google and Chinese search engine Baidu, but it’s a start.

The lab lets Tencent apply AI technologies quickly across its entire portfolio, and it’s already pushed image recognition into its QQ app to improve photo transfers.

Eventually, Tencent wants to offer its AI through a platform model so that if another vendor needs an instance of gaming AI or intelligence for a chatbot, it can get the core tech from Tencent. It’s very similar to how IBM has been offering Watson through Bluemix.

The additional benefit for Tencent is that in opening up its AI capabilities for other developers, it can collect more data and become more intelligent.

Annalect

The Annalect Utility Bot Interface (AUBI), a bot from Omnicom’s data and marketing sciences group, Annalect, made its debut at Cannes. During the demo, when it wasn’t being undermined by the venue’s crappy Wi-Fi signal, the bot responded to typed queries about where to find great rosé or the nearest party.

That was just to show off to the Cannes crowd. AUBI’s real purpose is to empower Omnicom’s nondata wonks to use more data. Remember how at last year’s Cannes, creative agencies like BBDO were starting to get into programmatic, and how BBDO even has a director of data solutions to help creatives and other nonquants use data?

AUBI is another step toward facilitating data use across Omnicom’s employees. So, if a creative staffer or a media planner has a question about a certain audience’s tendencies, they can punch it into AUBI’s text field, and voilà: an answer.

At least that’s the hope. AUBI hasn’t yet been deployed widely within Omnicom, and whether or not its agencies decide to use it will be the real test.

IBM Watson/Weather Channel And Soul Machines

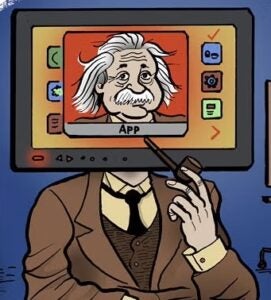

If you’ve seen “Avatar” or “Rise of the Planet of the Apes,” you’ve seen Dr. Mark Sagar’s work.

For those films, Sagar won two Oscars for his facial motion capture work. But at a Cannes event hosted by MEC, Sagar was repping his startup Soul Machines, which creates avatars – or in his preferred parlance, “digital humans” – to be used as customer service representatives. Watson, of course, provides the AI.

Despite the viability of video conferencing, contact centers still rely on voice calls. The problem with video conferencing is that it presumes the service rep is well-groomed and camera-ready, and – let’s be honest – that’s just not everyone’s forte.

Sagar insists his digital humans aren’t meant to replace service reps. Rather, like automated contact centers, they can relieve human employees of more menial tasks.

Digital humans, however, are lifelike and are designed to mimic emotion to establish a human-like connection. Are you calling because your credit card was stolen? The digital human will look sad. Are you ordering flowers to celebrate your 50th wedding anniversary? The digital human will duly look happy.

Sagar said his digital humans are already in a handful of pilots and that the solution is scalable, not particularly cost-prohibitive and highly customizable.

But actual nondigital humans are still involved, at least in the testing phase. For a project Sagar is doing with the Australian government to serve people with disabilities, for example, the deployment is highly curated, with psychologists ensuring that the digital humans respond to human users in the best way possible.

Of course, another test will be to see whether digitized faces, despite recent advancements, have fully crossed the uncanny valley – at least enough for most consumers to accept.