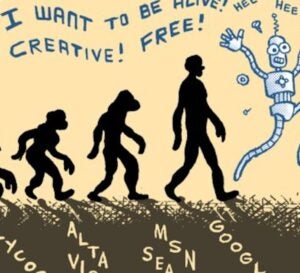

Bubbling around and through the advertising ecosystem is what some have called “Big Data.” Is it demo data? Location data? Or data from that little mouseover you just did with the graphic appended to this post? It seems like it’s any piece of data we can think of, no?

Time for some ecosystem input!

With previous ideas on the definitions for programmatic buying, programmatic selling and real-time bidding, we reached out to a group of executives who get their hands dirty with data every day and asked them:

“What is ‘Big Data’?”

Click below or scroll down for more:

- Phil Mui, Chief Product and Engineering Officer, Acxiom

- Boris Mouzykantskii, Founder, CEO & Chief Scientist, Iponweb

- James Deaker, Vice President of Advertising and Data Solutions, Yahoo!

- Dev Patel, CEO, Bityota

- Mike Driscoll, CEO, Metamarkets

- Jeremy Barnes, Founder & CTO, Datacratic

Phil Mui, Chief Product and Engineering Officer, Acxiom

“The world has always had ‘big’ data. What makes ‘big data’ the catch phrase of 2012 is not simply about the size of the data. ‘Big data’ also refers to the size of available data for analysis, as well as the access methods and manipulation technologies to make sense of the data. For many, ‘Big Table’, ‘Map Reduce’, ‘Hadoop’, ‘NoSQL’, among other such technologies are strongly correlated, and often used interchangeably, with ‘big data’. The adoption of these tools and techniques enables one to make sense of the volume, velocity, and variety of big data.

For marketers, ‘big data’ represents a challenge and an opportunity to develop intelligent analytical tools to better reach their customers at the right channel, right time, and right device. Big data promises significant payoffs: it enables marketers to invest proportionally to the value of existing or potential customer relationships, often in real-time. More importantly, big data promotes a culture of data-informed decision making. It is now much harder to justify a decision based solely on ‘intuition’ or ‘gut’ without some data analysis to back it up. If it takes a hyped term to make data-driven decision making pervasive, I am all for it.”

Boris Mouzykantskii, Founder, CEO & Chief Scientist, Iponweb

“Big Data is essentially a rebranding of ‘normal’ data. There is largely nothing new in the data available itself, but with the evolution of computer processing and storage subsystems it has become possible to not only store & interface with the entire data set, but increasingly take advantage of it in real-time.

In the past, storing a small fraction of the most useful data meant investing serious effort into structuring it. As companies began to store all the data that they generate, structuring it became too hard and the challenge shifted to data usage.

Today, Big Data enables us to scan the whole dataset on each query, in parallel, on multiple computers. This is incredibly insightful for analytics, but still takes significant time & computational power. More powerful ways to use this ‘big data’ are now emerging by feeding it into a machine learning engine for analysis. Increasingly this is where it is being used in online advertising to best effect; enabling real-time decisions around audience behavior, impression valuation and user response prediction.”
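To make the "scan the whole dataset on each query, in parallel" idea concrete, here is a minimal Python sketch. It is an illustration only, not Iponweb's method: the partition layout, the field names (`campaign`, `clicked`) and both function names are assumptions, and worker threads stand in for the separate machines a real cluster would use.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical impression log, split into partitions as if each one lived on
# a different machine. Field names are illustrative, not from a real system.
PARTITIONS = [
    [{"campaign": "a", "clicked": True}, {"campaign": "b", "clicked": False}],
    [{"campaign": "a", "clicked": False}, {"campaign": "a", "clicked": True}],
    [{"campaign": "b", "clicked": True}],
]


def scan_partition(rows, campaign):
    """Full scan of one partition: count impressions and clicks for a campaign."""
    impressions = 0
    clicks = 0
    for row in rows:
        if row["campaign"] == campaign:
            impressions += 1
            clicks += 1 if row["clicked"] else 0
    return impressions, clicks


def query_click_rate(partitions, campaign):
    """Scan every partition in parallel, then merge the partial counts."""
    with ThreadPoolExecutor() as pool:
        partials = list(
            pool.map(lambda rows: scan_partition(rows, campaign), partitions)
        )
    impressions = sum(p[0] for p in partials)
    clicks = sum(p[1] for p in partials)
    return clicks / impressions if impressions else 0.0


print(query_click_rate(PARTITIONS, "a"))
```

Each worker returns only a pair of counts, so the merge step stays cheap no matter how large the partitions are. The expensive part is the scan itself, which is the time and compute cost the quote refers to.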

James Deaker, Vice President of Advertising and Data Solutions, Yahoo!

- Volume of data that exceeds traditional processing methodologies

- Variety of data types and sources

- Velocity of data flowing in and out of the systems

In our industry, big data is such a hot topic because each of these dimensions needs to be addressed effectively to improve the consumer and advertiser experience through better personalization and targeting. It is necessary to understand all of a user’s interactions with web pages and apps, ideally across all the devices they use to access your content. Volume-wise, this generates terabytes and exabytes of historical data. The variety of data is huge because the data formats vary by device, location of the user and mode of interaction. Finally, to benefit the consumer and the advertiser, the data needs to be used in real-time for user content personalization, ad-targeting or real-time bidding.”

Dev Patel, CEO, Bityota

“Big Data can be defined by size/volume, rate of growth, and variety of structure of that data. The definition of Big Data and technologies are inextricably linked, where lack of the latter may force Big Data to peter out into a ‘damp squib.’

Traditional relational database systems were not designed to cope with Big Data. It is the combination of the three dimensions that has given rise to new Big Data technologies broadly in the areas of NoSQL, NewSQL, Storage and Analytics.

“Digital Advertising is a classic Big Data phenomenon where data from multiple sources is variable in structure, produced at varying intervals from real-time page/ad views to when a purchase occurs, and its size is in terabytes and petabytes. Data that used to be aggregated and archived can now be used for detailed analysis to improve performance. Analytics for marketing is a key use case of Big Data, where analysts want to be able to collect and store more data and integrate raw, full-detail data from campaigns, social feeds, audience click stream, online and offline purchase histories, etc., for programmatic buying and selling, closing the loop, attribution, building predictive behavioral models, etc.”

Mike Driscoll, CEO, Metamarkets

“Big data is data that must be distributed in storage and parallelized in processing. If your data fits in an Excel spreadsheet, it’s small. If it fits on a single traditional server (like MySQL), it’s medium. But if your data is so large that it’s spread across multiple machines, or on specialized hardware with multiple disks, congratulations: you’ve got Big Data.

Big data thus goes hand in hand with cloud-based, distributed storage systems like Amazon’s S3, as well as distributed processing frameworks like Hadoop, and massively parallel processing (MPP) databases such as Oracle’s Exadata and HP’s Vertica.

A fatal mistake for ad tech firms is streaming log data from a cloud of ad servers into a single analytics server, creating a slow, painful bottleneck. When working with Big Data, one’s analytics architecture must be similarly distributed and parallelized.

Here’s a table I developed to describe the differences between small, medium, and big data.”

Jeremy Barnes, Founder & CTO, Datacratic

“‘Big Data’ is about using datasets that are too large for standard solutions like databases. The internet is rich in data and many online businesses have problems dealing with it all. Typical big data solutions use tools like Hadoop to run relatively simple algorithms over vast quantities of data, using lots of computers.

These solutions have the advantage of scaling up to power the very biggest businesses, but are driven by the ‘how’ of implementation instead of ‘what’ problem to solve. Unfortunately, scalability of backend systems is rarely the main business challenge and it comes at a cost.

I feel that by focusing on ‘Big’, many people are missing the point. With some care, a $10,000 server can fit several billion impression records in memory and process them much faster and cheaper than with Hadoop. Is it still Big Data if it’s running on a single computer? Does it matter? You should care that your solution is appropriate, not that your data is big.”
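Barnes's single-server claim is easy to sanity-check with back-of-envelope arithmetic. The sketch below is only that: the quote gives neither a RAM size nor a record size, so the 256 GiB of memory, the 32-byte packed record and the 80% usable fraction are all assumptions chosen for illustration, and `records_that_fit` is a hypothetical helper.

```python
def records_that_fit(ram_gib, bytes_per_record, usable_fraction=0.8):
    """Estimate how many fixed-width records fit in a given amount of RAM.

    usable_fraction leaves headroom for the OS, indexes and working memory;
    0.8 is an assumed value, not a measured one.
    """
    usable_bytes = int(ram_gib * 1024**3 * usable_fraction)
    return usable_bytes // bytes_per_record


# Assumed figures: a server with 256 GiB of RAM and a 32-byte packed
# impression record (for example a few integer IDs plus a timestamp).
capacity = records_that_fit(ram_gib=256, bytes_per_record=32)
print(f"{capacity:,} records")
```

Under these assumptions the result is in the billions, consistent with "several billion impression records." The estimate is linear in record size, so doubling the record to 64 bytes halves the capacity, which is why the "with some care" in the quote matters.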

Read more:

- What is programmatic buying?

- What is programmatic selling?

- What is real-time bidding?

- What is ‘Big Data’?

- What is an algorithm?