Cody Plofker, CEO of cosmetics brand Jones Road Beauty, doesn’t mind when a test he’s running proves that a certain channel isn’t working. In fact, he’s pleased.

“You almost want to find out that something doesn’t work,” he said, “because then it’s like, all right, cool, now I know what I can optimize.”

That’s not how most marketers are wired – or how their tools work.

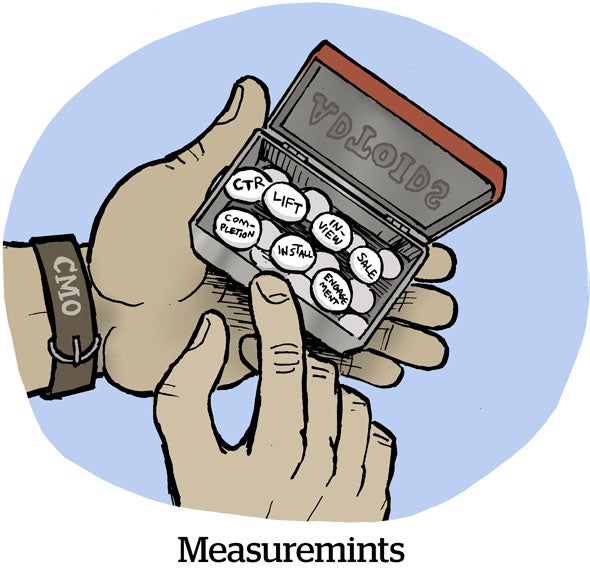

For them, measurement is usually a mashup of platform reporting, media mix modeling, an MTA vendor and the occasional geo-lift test, all claiming to show incrementality and none of them fully agreeing.

“There’s just so much noise and so much confusion,” Plofker said. “So it’s always, ‘Do I trust this or do I trust that?’”

It’s a good question.

A different take on MMM

A little over a year ago, Jones Road Beauty started working with ad measurement startup Haus to get a more consistent read on how its media performs across channels over time.

Not long after, it became an early design partner on a new Haus product called Causal MMM, a media mix model built around the results of real experiments rather than just correlations.

Most incrementality measurement focuses on a narrow question: If I change this one thing about a campaign, how much extra lift do I see compared with a control group that wasn’t exposed?

Causal MMM pulls multiple test results into one place and uses them to shape the media mix model. Rather than relying on correlations and patterns in historical data, as traditional MMM does, it treats the lift from a brand’s own experiments at specific spend levels as points on a curve, said Olivia Kory, chief strategy officer at Haus.

“Experiments solve that cause-and-effect problem as the foundation of the MMM,” Kory said. “The results anchor the data in such a way that we’re no longer guessing.”

Mix shift

For Jones Road, the beauty is how cleanly this approach plugs into its testing road map.

For example, Plofker’s team has already run experiments on Demand Gen, which is Google’s AI‑driven, PMax‑like product that serves video and display ads across YouTube, Discover and Gmail. They’ve tested it at both $7,000 and $10,000 per day, so they already have a return curve for that channel.

If Haus’s model suggests upping spend to, say, $15,000 per day on Demand Gen, the recommendation isn’t coming out of a black box. It’s based on real-world lift that Jones Road saw at those lower spend levels.
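To make the "points on a curve" idea concrete, here is a rough Python sketch. It is not Haus's model: the power-law curve shape is an assumption chosen for simplicity, and the incremental-revenue figures are made-up placeholders. Only the $7,000, $10,000 and $15,000 daily spend levels come from the reporting above.

```python
import math

# Daily spend levels are from the article. The incremental-revenue values
# are hypothetical placeholders, NOT Jones Road or Haus results.
TESTED_POINTS = [
    (7_000.0, 14_000.0),   # (daily spend, hypothetical incremental revenue)
    (10_000.0, 18_000.0),
]


def fit_power_curve(p1, p2):
    """Fit revenue = a * spend**b exactly through two experiment results.

    The power-law form is an illustrative assumption, not Haus's method.
    """
    (s1, r1), (s2, r2) = p1, p2
    b = math.log(r2 / r1) / math.log(s2 / s1)
    a = r1 / s1 ** b
    return a, b


def predict(a, b, spend):
    """Estimated incremental revenue at a given daily spend."""
    return a * spend ** b


a, b = fit_power_curve(*TESTED_POINTS)
proposed_spend = 15_000.0
estimate = predict(a, b, proposed_spend)

# Anything above the highest tested spend is an extrapolation, so the
# estimate is a hypothesis to test, not a measured result.
max_tested = max(spend for spend, _ in TESTED_POINTS)
is_extrapolation = proposed_spend > max_tested

if __name__ == "__main__":
    print(f"exponent b = {b:.2f} (below 1 means diminishing returns)")
    print(f"estimate at ${proposed_spend:,.0f}/day: ${estimate:,.0f}")
    print(f"beyond tested range: {is_extrapolation}")
```

With only two tested spend levels, any curve through them is loosely pinned down, which is one reason a recommendation at a higher spend level is better treated as the next thing to test than as a forecast.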

That’s a departure from how most MMM vendors operate, Plofker said, which is “very ‘just trust me.’”

“You’ll hear, ‘Hey, if you shift your mix this way, you’ll get these or those results,’” he said, “but you don’t really know for sure, and there are also too many variables.”

With Causal MMM, the recommendations simply become the next experiment on the road map, rather than a blind bet, and that makes the process more “scientific,” Plofker said.

Rerunning the numbers

This same logic applies to Plofker’s biggest channel: Meta.

For years, Plofker assumed he understood Meta’s impact on performance, because it was such a core part of his spend, but he’d never actually run an incrementality test on it. When he finally did, the results were sobering.

Although Meta was still incremental, Jones Road was overspending, and it turned out that a lot of the revenue was coming from returning customers rather than new acquisitions. “We were spending beyond the point of efficiency,” Plofker said.

That’s why it’s important to have a culture of testing and retesting, especially for big, high-spend channels, Kory said.

Jones Road ended up pulling back some of its spending on Meta’s Advantage+ Shopping Campaigns and shifting more of its budget into mid-funnel campaigns. What started as a single test turned into a multi-month testing road map that reshaped how the brand thinks about Meta’s role in its mix.

Causal MMM also helps Jones Road spot channels that shouldn’t be getting any budget at all. For instance, Plofker tested Google branded search and found that it wasn’t incremental.

“I was a little pissed when I found out I was spending $3,000 a day on something that wasn’t driving the business, but that’s good to know,” he said. “That’s $3,000 a day I no longer have to spend at all or I can put it into something that seems to be doing better, like Demand Gen.”

Reality checks

Jones Road isn’t alone in wrestling with tricky questions about where to spend and when to stop.

But it’s hard to know what to do, Kory said, when traditional MMMs and platforms are telling flattering stories – “they know what marketers want to hear” – but those stories don’t always stand up to scrutiny.

On top of that, there’s what Kory referred to as “that multicollinearity problem” to contend with. In statistics, multicollinearity happens when multiple independent variables are highly correlated, which makes it hard to figure out the individual impact of each.

In practical terms, that means that when brands increase their spending, they often do it across more than one channel simultaneously at times when their business would increase anyway, like during the leadup to Black Friday. They’re convinced their marketing worked, but the uptick in sales is really just thanks to the season.
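A small simulation shows how this plays out. Every number below is invented for illustration. Two hypothetical channels both raise spend during a holiday run-up, and sales are generated from the season alone, so by construction neither channel causes any of the lift.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate(days=120, seed=0):
    """Simulated daily spend for two hypothetical channels, plus sales.

    All figures are made up. Sales depend only on the season, so neither
    channel has any causal effect in this toy world.
    """
    rng = random.Random(seed)
    # The last 30 days stand in for a holiday run-up.
    season = [1.0 if day >= days - 30 else 0.0 for day in range(days)]
    channel_a = [5_000 + 4_000 * s + rng.gauss(0, 300) for s in season]
    channel_b = [3_000 + 2_500 * s + rng.gauss(0, 300) for s in season]
    sales = [20_000 + 15_000 * s + rng.gauss(0, 1_000) for s in season]
    return channel_a, channel_b, sales


if __name__ == "__main__":
    channel_a, channel_b, sales = simulate()
    print(f"channel A vs. channel B spend: {pearson(channel_a, channel_b):.2f}")
    print(f"channel A spend vs. sales:     {pearson(channel_a, sales):.2f}")
    print(f"channel B spend vs. sales:     {pearson(channel_b, sales):.2f}")
```

Both channels end up strongly correlated with sales and with each other, even though neither drives anything here. A model that sees only this history cannot separate their effects from the season, which is the gap a holdout experiment is meant to close.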

“We often, unfortunately, have to bring brands back to reality,” Kory said.

But Plofker loves a good reality check.

“We try to have a live test going at all times,” he said.