Bots are slipping through the cracks.

Digital security company Are You a Human claims to have identified a number of bots for sale in traffic that’s already been filtered by well-known verification companies.

“The bots we saw through ad units were both on the open exchange and through direct DSP relationships,” said Reid Tatoris, COO and co-founder of Are You a Human.

A number of the more sophisticated bots observed by the company were specifically programmed to trigger mouse movement to avoid detection. Publishers will often check for cursor motion as a proxy for humanness – scrolling over ads, for example, or generating mouse movement in all four quadrants of a web page.
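As a rough illustration of the four-quadrant proxy described above, here is a minimal Python sketch. The function names, the `(x, y)` sample format and the page dimensions are all assumptions made for this example; the article does not describe how any publisher or vendor actually implements the check.

```python
from typing import Iterable, Set, Tuple

Point = Tuple[float, float]  # hypothetical (x, y) cursor sample, origin at top-left


def quadrants_visited(points: Iterable[Point], width: float, height: float) -> Set[str]:
    """Return the names of the page quadrants containing at least one sample."""
    visited = set()
    for x, y in points:
        horizontal = "left" if x < width / 2 else "right"
        vertical = "top" if y < height / 2 else "bottom"
        visited.add(f"{vertical}-{horizontal}")
    return visited


def passes_quadrant_check(points: Iterable[Point], width: float, height: float) -> bool:
    """True when the cursor was seen in all four quadrants of the page."""
    return len(quadrants_visited(points, width, height)) == 4


# A scripted path that simply touches each corner passes the check.
scripted_path = [(10, 10), (990, 10), (990, 790), (10, 790)]
print(passes_quadrant_check(scripted_path, width=1000, height=800))
```

A path that touches each corner of the page satisfies this test, which is consistent with the point that bots programmed to generate mouse movement can get past simple motion checks.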

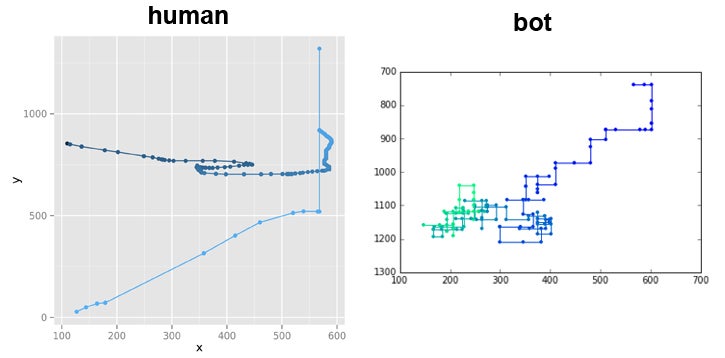

One bot, dubbed “Cardinal bot,” was found to move only along the four cardinal directions (north, south, east and west), or variations of them, on every page it visits. Another, the “Circle bot,” moves in circles, as its name suggests. A third, “Pentagram bot,” traces the shape of a five-pointed star within a circle.
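One way to picture how a pattern like the Cardinal bot's might stand out is to measure how much of a cursor path runs along the horizontal or vertical axis. The sketch below is an assumption-laden illustration, not Are You a Human's method: the function name, the 5-degree tolerance and the sample paths are all hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]  # hypothetical (x, y) cursor sample


def axis_aligned_fraction(path: List[Point], tolerance_deg: float = 5.0) -> float:
    """Fraction of movement segments within tolerance of north, south, east or west."""
    aligned = 0
    total = 0
    for (x0, y0), (x1, y1) in zip(path, path[1:]):
        dx, dy = x1 - x0, y1 - y0
        if dx == 0 and dy == 0:
            continue  # no movement between these two samples
        # Fold the heading into [0, 90) so all four cardinal directions map near 0.
        angle = math.degrees(math.atan2(dy, dx)) % 90.0
        offset = min(angle, 90.0 - angle)
        total += 1
        if offset <= tolerance_deg:
            aligned += 1
    return aligned / total if total else 0.0


cardinal_like = [(0, 0), (10, 0), (10, 10), (0, 10), (0, 0)]
diagonal = [(0, 0), (10, 10), (20, 20)]
print(axis_aligned_fraction(cardinal_like))  # every segment is axis-aligned
print(axis_aligned_fraction(diagonal))       # no segment is axis-aligned
```

A score near 1.0 across many page visits would be unusual for a person, though any cutoff would have to be tuned on real traffic.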

When compared to the way humans move about a page, the difference is stark.

Although it’s possible to develop a bot that appears to move randomly, telltale robot-like patterns will emerge within the randomness, while humans generally display what Tatoris called “wandering behavior.”

For example, humans, unlike bots, are likely to make mistakes, like scrolling too quickly and overshooting the object they originally meant to click on, requiring them to backtrack, thereby creating a jittery scroll path.
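The overshoot-and-backtrack behavior can be approximated by counting how often a scroll path changes direction. This is only a sketch under stated assumptions: the function name and the sample scroll offsets (in pixels) are invented for illustration, and a real detector would weigh many more signals.

```python
from typing import Sequence


def count_reversals(scroll_positions: Sequence[float]) -> int:
    """Count how many times a sequence of scroll offsets changes direction."""
    reversals = 0
    previous_direction = 0  # 0 means no movement has been seen yet
    for before, after in zip(scroll_positions, scroll_positions[1:]):
        delta = after - before
        if delta == 0:
            continue  # ignore samples where the page did not move
        direction = 1 if delta > 0 else -1
        if previous_direction and direction != previous_direction:
            reversals += 1
        previous_direction = direction
    return reversals


smooth_scroll = [0, 100, 200, 300]         # steady movement in one direction
jittery_scroll = [0, 200, 450, 400, 420]   # overshoots, backs up, corrects
print(count_reversals(smooth_scroll))
print(count_reversals(jittery_scroll))
```

The steady path produces no reversals, while the path that overshoots and corrects produces two.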

“It’s not impossible to code something like that, but it’s definitely difficult,” Tatoris said.

Difficult – and basically unnecessary, said White Ops CEO Michael Tiffany. Bot operators aren’t looking to pass the Turing test or create artificial intelligence. They’re looking to fool people for a period of time and siphon as much cash as possible within that window before their bots are found out. Detection is built into a fraudster’s mental math.

“Fraud is a multibillion-dollar industry not because bots are being written by breakthrough AI engineers – smart operators are continuously infecting new machines,” Tiffany said. “The essence of the game is to make enough money between when they freshly infect a new computer and when they ultimately do get caught.”

It’s a continual ebb and flow, said Forensiq CTO Matt Vella. Devices are constantly being infected and cleaned and potentially reinfected. Fraudulent sites are regularly taken out of rotation and new ones placed in their stead.

“A new site is automatically registered and content is scraped and programmatically placed on the page,” Vella said. “If I’m a criminal and I get stopped in my tracks, if the bot master I’m working with is cut out of the exchanges because it’s been discovered, there are plenty of other vehicles and willing associates with which to commit fraud.”

However, not every bot commits fraud. There are black-hat SEO bots, rogue crawlers, content scrapers, bots that forcibly download unwanted content, bots whose express purpose is to flood comment sections with links and spam – not to mention all of the legitimate bots out there, like the ones that index web pages or aggregate prices from various sources. Some verification companies even use bots to go and check whether a particular ad loads.

Although most professed “good bots” will declare themselves as such so they’re not added to the impression count, some are bound to sneak through along with the nefarious ones. More than half of web traffic is automated, with only around 41% found to originate from humans, according to research from Distil Networks.

That is part of why it’s not altogether surprising that Are You a Human detected bots within traffic that had already been put through a screening process.

“There are so many types of bots written for so many things – and even if you stop all the ad fraud, when the content scrapers or the spam bots hit a page, it’s still an impression,” Tatoris said. “The vast majority of bots have nothing to do with ad fraud – they’re unknown bots and they probably always will be.”

The matter is further complicated by the fact that it’s often difficult to connect the behavior of a particular bot as seen by an ad network to the actual command-and-control infrastructure sending out orders.

“You could have several botnets that might be running what is essentially the same botnet software, but the owner/operators are different people and they’re doing different things with them,” Tiffany said. “A lot of the operators out there are using a combination of some technology they built themselves and something they bought in the criminal underground.”

And then there’s the fact that different stakeholders have different priorities. Say 30% of a website’s traffic is flagged as high-risk. A publisher might be all right with that number – 70% of the traffic is OK – while an advertiser might be more aggressive, refusing to buy anything from there at all.

“It’s really about false negatives slipping through – how rigorous you’re being and the particular client’s risk threshold,” said Forensiq CEO David Sendroff. “If it’s acceptable to have more false positives, you could be wrong and classify some things as fraud that really aren’t. That means you could catch a lot more fraud, but you also have to be willing to eliminate some of the good traffic with it.”
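The trade-off Sendroff describes can be shown with a toy example. The risk scores, labels and thresholds below are made up for illustration and do not come from Forensiq or any other vendor. The sketch simply counts false positives (good traffic flagged as fraud) and false negatives (bots that slip through) at a given cutoff.

```python
from typing import List, Tuple

# Hypothetical data: (risk_score, is_actually_bot)
ScoredVisit = Tuple[float, bool]


def errors_at_threshold(scored: List[ScoredVisit], threshold: float) -> Tuple[int, int]:
    """Return (false_positives, false_negatives) when score >= threshold is flagged."""
    false_positives = sum(1 for score, is_bot in scored if score >= threshold and not is_bot)
    false_negatives = sum(1 for score, is_bot in scored if score < threshold and is_bot)
    return false_positives, false_negatives


visits = [
    (0.95, True),
    (0.80, True),
    (0.60, False),
    (0.55, True),
    (0.30, False),
    (0.10, False),
]

print(errors_at_threshold(visits, threshold=0.9))  # lenient cutoff
print(errors_at_threshold(visits, threshold=0.5))  # aggressive cutoff
```

With this sample, the lenient cutoff flags no good traffic but lets two bots through, while the aggressive cutoff catches every bot at the cost of discarding one legitimate visit.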

Another reason bots are able to sidle into the general flow is the way they operate: by compromising a real person’s computer. That person is still doing real human things, even as the bot does its work in the background, which might include visiting a variety of different websites in order to pick up third-party cookies and tracking pixels in a quest to look like an in-market potential customer.

The lines are blurred.

It’s a bit like buying Twitter followers, Tiffany said. Along with the fake followers, you’ll also get a bunch of real verified Twitter accounts in the mix.

“That’s because there are plenty of people with real Twitter accounts whose computers have been compromised with malware,” Tiffany said. “And that’s why you see so much bot traffic get verified – it looks just like a bona fide person you can trace back to a real profile and a real purchase history.”