Advertisers have been dealing with governance questions for years: privacy rules, data minimization, consent, dark patterns, kids – you name it.

But now AI is adding another layer of complexity, and a lot of that work is landing on the same people who already juggle privacy, security and data governance. There isn’t yet a clear line between privacy and AI governance, which makes basic questions like “Who owns this?” and “What does good look like?” surprisingly hard to answer.

The IAPP has been studying that shift up close.

According to its recent research, 48% of companies say they lack sufficient budget and resources to invest in governance professionals, while 67% say the primary responsibility for AI governance rests with the privacy function.

Taken together, these numbers point to an AI governance role that’s still being defined inside most organizations.

“There isn’t a consistent model yet,” said Ashley Casovan, managing director of the IAPP’s AI Governance Center. “Our research is survey-based and we’re talking to privacy professionals, of course, which introduces a little bias, but even with that caveat, it’s clear that privacy teams are getting pulled into AI governance.”

Casovan spoke with AdExchanger about how AI is reshaping who does governance work and how.

AdExchanger: How are companies structuring their AI governance right now?

ASHLEY CASOVAN: It looks very different from one organization to the next. In some places, AI governance is added onto what privacy people are already doing. In others, the job has evolved so much that it’s essentially a new role where this person is focused almost entirely on AI governance and someone else has taken over the privacy function.

It’s not just privacy, though. For example, cybersecurity and data governance professionals are also being pulled into this work. The mix really depends on the organization. Is it a complex organization, for example, or is it a sector-specific small or medium-size business?

What does AI governance work entail?

It ranges from policy to fairly technical evaluations.

On the policy side, you’re translating high-level principles into concrete rules for how AI can be used and setting up governance structures – committees or boards – so the right people can be at the table to make decisions. There’s also compliance and adhering to standards, which involves implementing frameworks like the NIST AI Risk Management Framework.

Then you have technical work, such as evaluating systems for bias and identifying cybersecurity risks that might come through those systems.

And on top of that is ethics and assurance work, thinking through the broader implications of how systems are used and, in some sectors, building in independent evaluations or audits.

Okay, that’s a lot. How much upskilling is needed to do that work?

You still need a strong understanding of regulation, but the roles we’re seeing also expect people to move beyond a purely compliance lens and understand different ways to do technical evaluations on AI systems.

We’re also seeing assurance teams being pulled into AI work, which raises questions about what training looks like for accountants and internal auditors when they’re asked to review AI systems as part of their job.

You mentioned regulation. California’s focus on automated decision-making feels like a bellwether. Do companies recognize that what California does on automated decision-making could end up setting the tone nationally?

California is already a test bed for privacy policy, and we’re seeing the same thing with AI and automated decision-making. The state has a large, diverse population, and it’s home to many of the major tech platforms, which I think creates a stronger appetite among regulators.

We often talk about Colorado or New York, but California is where some of the most substantive and mature debates are happening. What gets worked out there on automated decision-making is very likely to influence what other states decide to do.

More and more data is being collected passively by AI-driven ad tech, and a lot of it doesn’t feel truly consensual. Is informed consent dead? And what can advertisers do to be accountable?

First, I’ll say that context matters so much.

When I worked in government in Canada, for example, there was interest in doing targeted outreach to Indigenous populations to inform them about benefits they were entitled to. That’s a positive goal. But, given the history, it also raises sensitive questions about what data is collected, how you segment people and what’s appropriate.

But then, in areas like medical research and pharmaceuticals, there are more mature rules about what data can be collected, how it can be reused and what happens downstream. Advertising doesn’t yet have that same level of guardrails, but it can learn from those processes.

For example, be very clear about the purpose and context of data collection, think hard about downstream use and, where possible, look at ways to reduce your reliance on sensitive personal data.

With so much still in flux, what does “good” AI governance look like today?

So many technology systems incorporate AI. There might be a system update, and all of a sudden it’s like, “Hey, you have an agentic AI chatbot now.” So, first, you need to know where AI is actually being used in your organization.

From there, you need to define what good looks like for your organization in terms of policies, standards and internal principles, and then have a governance mechanism with real responsibility for decisions and oversight.

You also need to consider potential harms and impacts and how each use actually affects people, not just look at the risk categories on a checklist. That should feed into the technical and data safeguards you have in place. Finally, you have to understand your compliance obligations in the jurisdictions you operate in, whether that’s disclosure requirements, recourse mechanisms or other obligations.

But I see this as an opportunity for AI governance professionals, whether you come from privacy, cybersecurity or data governance, to be the ones who shine a light on implementation challenges. It’s a chance to do more than just think about it as a check-the-box exercise.

Answers have been lightly edited and condensed.

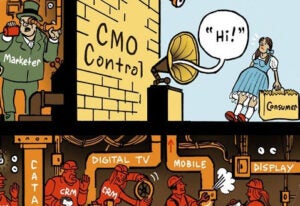

🙏 Thanks for reading! As always, feel free to drop me a line at allison@adexchanger.com with any comments or feedback. And say “hi” to this not-so-little guy, who’s clearly busy getting his company’s AI and data governance house in order.

P.S. If you haven’t snagged your ticket to Programmatic AI yet, whatcha waiting for? I’ll be moderating a chat on May 19 with Nikhil Kolar, Microsoft AI’s VP of product for publishers. We’ll be getting into some weighty stuff, including how to rebuild publisher value for the agentic web, no less. See you in Las Vegas!