“The Sell Sider” is a column written by the sell side of the digital media community.

Today’s column is written by Michael Weaver, senior vice president of business growth and development at Al Jazeera Media Network.

Following the 2016 presidential election, the impact of so-called “fake news” reverberated around the world. As a result, society called on platforms such as Facebook and Twitter to take responsibility for their roles in disseminating news – fake or otherwise – to billions of people.

For the most part, the platforms punted, leading to the rise of a new type of content intermediary. These companies, many of which offer their services directly to Facebook and Twitter, judge and report on the “trust and accountability” of today’s news outlets.

Unfortunately, there are as many lingering questions about their capabilities and interests as there are about those of Facebook and Twitter. And their emergence is taking the onus off the platforms to do better.

Diffusion of responsibility

In the aftermath of the 2016 presidential election, the depth of the major social platforms’ identity crises was laid bare. Mark Zuckerberg publicly clung to the notion, even in congressional testimony, that Facebook is a technology company, not a media company. There’s no reliable technological mechanism for eliminating fake news that aligns with the platforms’ identities as tech companies. So they’ve turned elsewhere for editorial judgments.

Facebook works with an evolving roster of more than three dozen external fact-checkers to police the content on its platform, and new options are emerging every day. While individual publications, such as the Associated Press, NPR and Politico, have formed their own fact-checking arms, independent organizations have also emerged to take on the task.

Groups such as OpenNews, NewsGuard and the American Press Institute’s Accountability Journalism and Fact-Checking Project have sought to fill this role independently. They pride themselves on their teams of journalists and analysts who assess news organizations based on their credibility and transparency.

These intermediaries exist because platforms like Facebook and Twitter have abdicated their responsibility as media companies. Their emergence also raises an important question: Who watches the watchmen?

No more third-party scapegoats

These startups may take money from all manner of questionable sources, and those interests may impact their ability to serve as unbiased mediators of truth. As intermediaries, they disperse rather than solve the editorial judgment challenges that existed within the platforms.

We have a right and an obligation to explore conflicts of interest that affect the dissemination of information, but we must not let that scrutiny distract from the core issue: the platforms’ own culpability in creating this problem and failing to address it on their own.

Even as major platforms refuse to take responsibility for the content on their properties, they must bear the editorial responsibility that comes with being distributors of news. Meanwhile, as a society and, particularly, as publishing professionals, it’s time for us to direct the hardest questions toward the true source of the problem: the platforms as news distributors, not the third-party arbiters of news quality.

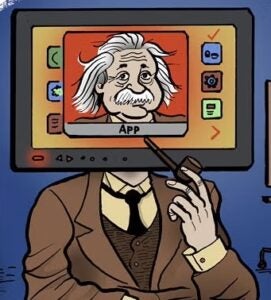

The problem of fake news is endemic on social platforms because they render news from multiple sources into a uniform feed and present rigorous journalism next to user-generated content. There has always been plenty of misinformation out there. What’s dangerous about this mutation is that, on social platforms, misinformation looks like legitimate news.

The best fix for fake news isn’t to apply outside checks to the platforms, but to unseat platforms as the primary distributors of news. Platforms can serve a role in driving awareness and traffic back to media properties, but as long as news is consumed on platforms, the risk of conflating the real and the fake will persist. No number of third parties will change that.

Follow Al Jazeera (@AJENews) and AdExchanger (@adexchanger) on Twitter.