“Data-Driven Thinking” is written by members of the media community and contains fresh ideas on the digital revolution in media.

Today’s column is written by Rémi Lemonnier, co-founder at Scibids.

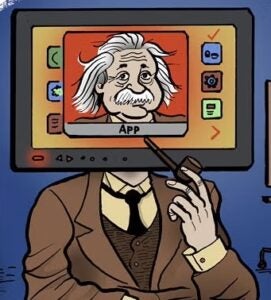

Machine learning (ML) is a very trendy term, raising huge expectations on one end and creating fantastic fears on the other.

Even if there is a lot of “ML-washing” in the ad tech industry, there is no doubt that ML is reshaping the digital advertising ecosystem and will continue to do so. A recent Juniper Research study predicted machine-learning algorithms would generate some $42 billion in annual ad spend by 2021, up from an estimated $3.5 billion in 2016.

Following in the footsteps of quantitative finance, real-time bidding is transitioning from a manual data analysis domain to a purely mathematical one. I am not referring to single cost-per-click or cost-per-action optimization at the campaign level, but to holistic solutions that take into account multiple KPIs and media plan constraints for a given advertiser.

Add first-price auctions, header bidding and the generalization of complex multitouch attribution models on top of that, and we have the seeds of a revolution. Here’s how to avoid becoming the next Marie Antoinette.

If you are a publisher, you will need to be selected for each campaign by the impartial algorithm – there will be no more guaranteed seats in the acquisition whitelist. Publishers that invest in impactful and viewable ad slots and enrich their bid requests with highly relevant data will concentrate the money flow, while others will see their revenues decrease. Anti-fraud algorithms will become more sophisticated, and black-hat players will get chopped off all major platforms.

If you are a data provider, be prepared: Hand-picking data segments and targeting them in dedicated tactics will become obsolete. Each advertiser will have hundreds of data segments to choose from, and the algorithm will automatically define the value for each segment depending on the context.

Since algorithms will seamlessly reason in terms of the total amount (media plus data) they can pay for an impression, data will have to be priced very carefully. Using ML to capture true real-time intent will be essential to thrive in this environment. Data providers struggling to demonstrate that their segments are significantly correlated to conversions will face serious difficulties.
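To make the “media plus data” reasoning concrete, here is a minimal sketch of how a buyer-side algorithm might decide whether a data segment pays for itself. Everything in it is an assumption for illustration: the function names, the break-even logic and the numbers are hypothetical, not a description of any real bidder.

```python
def expected_value_cpm(conv_rate, conversion_value):
    """Expected revenue per 1,000 impressions at a given conversion rate."""
    return conv_rate * conversion_value * 1000


def max_media_cpm(conv_rate, conversion_value, data_cpm=0.0):
    """Highest media CPM that keeps total cost (media + data) at break-even."""
    return expected_value_cpm(conv_rate, conversion_value) - data_cpm


def segment_pays_for_itself(base_rate, rate_with_segment, conversion_value, data_cpm):
    """True if the extra value attributed to the segment exceeds its data fee."""
    uplift_cpm = expected_value_cpm(rate_with_segment - base_rate, conversion_value)
    return uplift_cpm > data_cpm


# Hypothetical inputs: 0.1% baseline conversion rate, $10 per conversion,
# and a data fee of $1.50 per 1,000 impressions.
strong_segment = segment_pays_for_itself(0.001, 0.0015, 10.0, 1.5)
weak_segment = segment_pays_for_itself(0.001, 0.0011, 10.0, 1.5)
print(strong_segment, weak_segment)
```

Under these made-up numbers, a segment that lifts the conversion rate from 0.1% to 0.15% covers its fee, while one that lifts it to only 0.11% does not, which is the pricing pressure described above.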

If you are a trading desk, you will have to learn to speak fluently to the algorithm to best translate your clients’ needs into this new language. Only by understanding what is going on under the hood will you be able to guide the algorithm toward true performance. Forget, for instance, the long hours spent manually blacklisting underperforming domains or geolocations; the algorithms will be much better than any human at identifying them.

Worse, those single-variable analyses will needlessly shrink the number of available contexts. For instance, in manual trading it is usually a good decision to stop campaigns between midnight and 6 a.m. But in an algo trading setting, that would degrade overall performance by excluding the few night shoppers the algo would have been able to identify. Those who hang on to manual bid optimization or don’t acquire a deep understanding of their new tools will ultimately lose a lot of budget.
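The night-shopper point can be illustrated with a toy comparison between a single-variable rule and a per-context rule. The statistics, the second variable (cart abandoners) and the break-even threshold below are all invented for the example; this is a sketch of the reasoning, not of any production algorithm.

```python
# Made-up campaign stats per context:
# (is_night, is_cart_abandoner) -> (impressions, conversions)
STATS = {
    (False, False): (100_000, 120),
    (False, True): (10_000, 50),
    (True, False): (50_000, 10),
    (True, True): (1_000, 6),
}
MIN_RATE = 0.001  # hypothetical break-even conversion rate


def rate(impressions, conversions):
    return conversions / impressions


def manual_rule(stats, min_rate):
    """Single-variable analysis: keep or drop a whole time slot on its average rate."""
    kept = set()
    for night in (False, True):
        imps = sum(i for (n, _), (i, _c) in stats.items() if n == night)
        convs = sum(c for (n, _), (_i, c) in stats.items() if n == night)
        if rate(imps, convs) >= min_rate:
            kept.add(night)
    return kept


def per_context_rule(stats, min_rate):
    """Multivariable analysis: judge each (time slot, audience) context on its own."""
    return {ctx for ctx, (i, c) in stats.items() if rate(i, c) >= min_rate}


manual_kept = manual_rule(STATS, MIN_RATE)
context_kept = per_context_rule(STATS, MIN_RATE)
print("time slots kept by manual rule (is_night):", manual_kept)
print("contexts kept by per-context rule:", sorted(context_kept))
```

With these invented figures, the average night-time conversion rate falls below the threshold, so the manual rule switches off the whole night. The per-context rule also drops most night traffic but keeps the small group of night-time cart abandoners, whose conversion rate is the highest of the four contexts.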

All of this is fundamentally good news for the industry, since advertisers will get more bang for their buck, which will translate into increased budgets for programmatic players.
