“Data-Driven Thinking” is written by members of the media community and contains fresh ideas on the digital revolution in media.

Today’s column is written by Augustine Fou, digital strategist and independent ad fraud researcher.

The ways that malware-compromised PCs can cause ad fraud, including fake ad impressions, fake clicks, ad injection and cookie stuffing, have been documented over the years.

More recently, companies like Forensiq showed video proof of how malware on a single PC can rack up 10,000 fake ad impressions per day by running in the background. Bots can do sophisticated things and emulate virtually everything a human can do when surfing the web.

Efforts to optimize digital ads via viewability are certainly not enough to make a dent in the amount of fraud. Even if the ad is 100% viewable, it is still useless to the advertiser – and fraudulent – if the user that caused it to load was a bot, not a human.

That is why solving the nonhuman traffic (bot) problem should come first, before tackling other optimization opportunities, such as viewability and targeting. Optimizing for viewability can even send more ad dollars to bad guys, who can make it appear that their websites offer 100% viewability by stacking all ads above the fold.

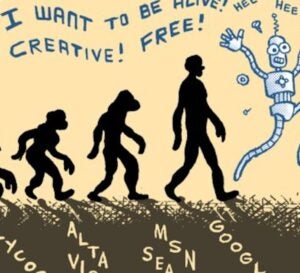

But detecting nonhuman traffic or bots is an even harder problem than viewability, because the bad guys’ bots don’t declare themselves honestly. They do not say they are Googlebot, Bingbot, MSNbot or Facebookbot in the user agent. And they are not among the 10,000 or so known crawlers and bots.
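To see why matching on the user agent falls short, consider a minimal Python sketch of a declared-bot check. The token list and the function name `is_declared_bot` are illustrative assumptions, not any vendor's actual method; the tokens are simply the crawler names mentioned above.

```python
# Illustrative tokens only: the crawler names mentioned in the column.
# A real known-bot list would be far longer and is assumed, not shown.
KNOWN_BOT_TOKENS = ("googlebot", "bingbot", "msnbot", "facebookbot")


def is_declared_bot(user_agent: str) -> bool:
    """Return True only if the user agent openly names a known crawler."""
    ua = user_agent.lower()
    return any(token in ua for token in KNOWN_BOT_TOKENS)


# An honest crawler announces itself and is caught.
honest = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
# A fraudulent bot sends a browser-like string and passes the same check.
disguised = "Mozilla/5.0 (Windows NT 6.1; Trident/7.0; rv:11.0) like Gecko"

honest_flagged = is_declared_bot(honest)
disguised_flagged = is_declared_bot(disguised)
```

A check like this only filters bots that choose to identify themselves, which is exactly the group that is not committing fraud.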

The sophisticated bots do everything they can to hide and avoid detection by pretending to be normal browsers, such as Internet Explorer or Safari. They pass fake parameters, such as browser version, screen resolution and geolocation, and they keep cookies and visit a pattern of websites to build up a targeting profile. They even create fake human-like actions, such as mouse movement, page scrolling and clicks.

Bots In The Henhouse

In addition to malware bots on PCs, there has been a dramatic increase in the use of headless browsers and mobile simulators, “spun up” in data centers to commit the most common forms of ad fraud: generating fake display, mobile and video ad impressions or creating fake clicks on search, video and social ads. About 67% of bots come from residential IP addresses, with the rest coming from data centers, telecom providers via mobile traffic and other channels, according to a recent White Ops/ANA study. The ratio of bots coming from data centers vs. residential IP addresses is expected to rise as consumers upgrade their browsers to newer, more secure versions and avoid reinstalling compromised toolbars and browser plugins.

However, in the cloud (data centers), millions of bots can be created and shut down at will. The bad guys use these bots to create vast amounts of fake traffic, fake display and video ad impressions and fake search ad clicks, without the need for unsuspecting humans to accidentally download malware or leave their home computers on 24/7.

Bad guys also use advanced bots to add items to shopping carts, deliberately abandon them and then attract premium retargeting ads. With mobile simulators designed to test apps before launch, bad guys can do things like download and install mobile apps (app install advertising), play mobile games to buy virtual goods or click on ads, then enter mobile search terms and click on mobile ads.

Key Metrics: ‘Humanness’ Of Users, Visits And Traffic

Detecting bots is hard. Antifraud vendors that estimate bot activity at only 1% to 3% of all impressions aren’t detecting the vast majority of the bad guys’ bots, which won’t match any known bot list the vendors have procured. Other vendors use big data analytics, machine learning and heuristics on network traffic to deduce which visits or cookies are bots.

Advertisers and their agencies can take several actions to combat bad bots immediately. Consider the concept of “lopping off” the lowest 10% of bad traffic, impressions or visits, which are not “converting” anyway. Identify which websites generate lots of ad impressions, traffic or clicks without engagement or measurable conversions.
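As a rough illustration of the “lopping off” idea, the Python sketch below ranks sites by conversion rate and returns the lowest-performing 10%. The function name `worst_sites`, the input shape and the sample numbers are all assumptions made for this example; a real analysis would likely also weigh engagement signals and spend.

```python
def worst_sites(stats, fraction=0.10):
    """Return the lowest-converting share of sites.

    stats maps a site name to (impressions, conversions).
    Sites with no impressions are skipped because they have no rate to rank.
    """
    rates = {
        site: conversions / impressions
        for site, (impressions, conversions) in stats.items()
        if impressions > 0
    }
    ranked = sorted(rates, key=rates.get)  # lowest conversion rate first
    cutoff = max(1, round(len(ranked) * fraction))
    return ranked[:cutoff]


# Hypothetical data: ten sites, one of which produces volume but no conversions.
sample = {f"site-{i}.example": (10_000, 10 * i) for i in range(1, 10)}
sample["high-volume.example"] = (500_000, 0)

candidates = worst_sites(sample)
```

With this made-up data, the single site that delivers many impressions and zero conversions is the one flagged for review.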

Add the worst-performing 10% to a blacklist that all advertising venues should obey, and don’t serve any ads on those sites. Don’t buy traffic or even clicks, such as those from content syndicators, because those are not humans visiting your site. Also, refuse to serve ads to any users whose IP addresses belong to data centers, because normal human users don’t access the Internet through Amazon Cloud.
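The data center rule can be sketched in a few lines of Python using the standard `ipaddress` module. The ranges below are placeholder documentation blocks, not real data center ranges, and the name `is_data_center_ip` is hypothetical; in practice the list would have to come from cloud providers' published ranges or a commercial IP intelligence feed.

```python
import ipaddress

# ASSUMPTION: placeholder networks reserved for documentation (RFC 5737).
# Substitute real data center ranges from a trusted source.
DATA_CENTER_RANGES = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def is_data_center_ip(ip: str) -> bool:
    """Return True if the address falls inside any listed data center range."""
    addr = ipaddress.ip_address(ip)
    return any(addr in network for network in DATA_CENTER_RANGES)


blocked = is_data_center_ip("192.0.2.15")    # inside a listed range
allowed = is_data_center_ip("203.0.113.9")   # outside every listed range
```

An ad server could run a check like this before bidding and skip the impression when it returns True.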

And finally, measure the “humanness” of the ad inventory and site visits – that is, the ratio of humans vs. bots that caused the ads to load and that arrived on your website, respectively. Armed with this data, it’s possible to lop off the lowest 10% of ad exchanges that carry “dirty” inventory and send nonhuman visits to destination sites.
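One way to express “humanness” as a number is the share of events attributed to humans, as in the Python sketch below. This definition, the function names and the sample counts are assumptions for illustration; the sketch also presumes that some upstream detection step has already labeled each event as human or bot, which is the hard part.

```python
def humanness(human_events: int, bot_events: int) -> float:
    """Share of events caused by humans, from 0.0 (all bots) to 1.0 (all humans)."""
    total = human_events + bot_events
    return human_events / total if total else 0.0


def rank_exchanges(counts):
    """Sort exchange names from dirtiest (lowest humanness) to cleanest.

    counts maps an exchange name to (human_events, bot_events).
    """
    return sorted(counts, key=lambda name: humanness(*counts[name]))


# Hypothetical counts per exchange.
exchange_counts = {
    "exchange-a": (9_000, 1_000),
    "exchange-b": (2_000, 8_000),
    "exchange-c": (6_000, 4_000),
}

ranking = rank_exchanges(exchange_counts)
```

The exchanges at the front of the ranking are the candidates to cut first.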

Showing ads to humans instead of bots is the most surefire way for marketers to improve the performance of their digital advertising.

Follow Augustine Fou (@acfou) and AdExchanger (@adexchanger).